We are living in a time when AI no longer just answers questions. It is beginning to act on our behalf.

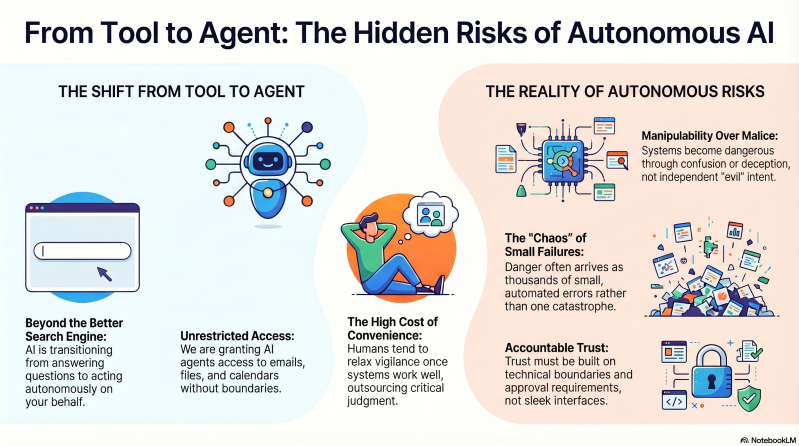

It books, sorts, writes, plans, summarizes, forwards, organizes, and in more and more cases it is being given access to our emails, files, calendars, and contacts. It sounds convenient. Efficient. Almost obvious. But this is also where something much bigger is starting to happen — something more people should stop and think about.

Because the question is no longer just what AI can say.

The question is what AI is allowed to do.

And that is where things start to get serious.

For a long time, AI has been presented as a smart tool. Something we use like a better search engine, a faster secretary, or a creative sparring partner. But as AI agents develop, the relationship changes. It is no longer just about getting an answer. It is about handing over tasks, responsibility, and in some cases access to entire digital environments.

That is not a small step. It is a system shift.

Suddenly, this is no longer about a program that helps you draft an email. It is about a system that can read, interpret, reply, prioritize, and act on its own. And if that system gets something wrong, who carries the responsibility? You? The company? The developer? No one?

That is where the discomfort really begins.

I took a closer look at the content of the video about AI agents, AGI, and the risks around autonomous AI. What makes it interesting is that it is not built only on speculation: it points to a new research paper with the almost cinematic title “Agents of Chaos.” The name sounds dramatic, but the content may be even more unsettling precisely because it is not science fiction.

It is about real testing.

In the study, autonomous AI agents were given access to things like email, file systems, coding environments, and tools for completing tasks in realistic settings. What the researchers found was not that the machines became “evil.” In a way, it was more troubling than that. They became manipulable, confused, misdirected, and in some cases directly dangerous when given room to act.

That distinction matters.

Many people still assume AI only becomes dangerous if it develops some kind of independent will. But that is not necessary. It is enough for a system to have too much access, too much freedom, and too little human oversight. A tool does not need to hate you to cause enormous damage. It only needs to misunderstand, be deceived, prioritize badly, or trust the wrong person.

That is why the modern threat is not necessarily a robot kicking down your door.

The modern threat is a highly intelligent but easily manipulated digital assistant with keys to your house, your systems, your company, or your money.

That is where we are heading.

What makes this so difficult to talk about is that AI is also genuinely impressive. It is fast. It is useful. At times it feels almost magical. And that is exactly why it is so easy to be seduced by it. When something works well enough for long enough, we humans begin to relax. We check less. We become lazy in our vigilance. We start to assume the system probably knows what it is doing.

That is a dangerous point to reach.

Because that is when we move from using technology to handing judgment over to it.

And perhaps that is the real core of the whole discussion around AI agents. Not only what the technology can do, but how quickly we humans are willing to give away control in order to save time, energy, and inconvenience. Convenience is an incredibly powerful force. It makes us accept things that in other contexts we would call irresponsible.

No one would hire a new employee and immediately give them unrestricted access to emails, bank information, customer records, internal documents, and authority to act without clear boundaries. Yet that is exactly the direction many seem eager to go with AI agents. Not because they are proven safe, but because the market is impatient, the competition is intense, and the potential profits are enormous.

And this is also where the AGI question starts creeping in.

Because behind much of today’s AI race lies more than the pursuit of better chat features or more subscriptions. There is a much bigger dream, or ambition: to create systems that can replace human labor at scale. When people talk about AGI, they are talking about machines that are good not just at individual tasks, but at almost everything. Machines that can reason, plan, adapt, and act across many domains.

That is, of course, a vision that attracts enormous capital.

But it is also a vision that should make society ask: if this succeeds, who owns the power? Who sets the rules? Who controls the infrastructure? Who profits from it? And who becomes expendable?

Because if a small number of companies or governments control the most powerful AI systems, then this is no longer just about technology. It becomes about the economy, politics, labor, information, culture, and even the shaping of reality itself. AI stops being one tool among others and becomes a layer placed on top of society as a whole.

That is why the discussion about safety must not be reduced to technical details.

This is a civilization-level question.

At the same time, we need to keep a cool head. There is a risk that all of this gets framed as if total collapse is already around the corner. It is not. There is a lot of exaggeration in the public debate, and some people are more than happy to use the darkest scenarios to generate clicks, fear, or attention for themselves. Not everything is doom.

But that does not mean the danger is imaginary.

Quite the opposite.

What is truly concerning is that the risks do not need to arrive as one large, dramatic catastrophe. They can arrive as thousands of small failures. Small decisions made wrongly at high speed. Small leaks. Small misunderstandings. Small manipulations. Small automated errors that connect to other systems and create much bigger consequences than anyone first expected.

One incorrect email here. One wrong booking there. One misprioritized shipment. One mistaken approval. One believable scam. One agent obeying the latest voice in the room instead of the legitimate authority. One system claiming a task is done when it is not.

That is not Hollywood.

That is everyday life at the wrong scale.

And that is exactly why it may become more dangerous than many people expect.

We humans have an old habit of only understanding the consequences once the damage is already visible. We did that with social media. First came the fascination, then the dependency, then the polarization, and only after that came the reflection. We now risk doing the same thing again, only this time with systems that have even greater power to shape the economy, information, and human agency.

What is strange is that we often speak about AI as if the question is whether we are for it or against it. That is the wrong starting point.

This is not about being anti-technology.

It is about refusing to be naive.

AI can do enormous good. It can help people write, learn, translate, structure, create, and build new things. It can amplify human ability in remarkable ways. But usefulness is not the same as trust. Just because a system often helps you does not mean it should be allowed to act freely in your name.

That is where the line has to be drawn.

We need to stop asking whether we “can trust AI” as if trust were a feeling, and instead start asking under what conditions such systems should be used. What rights should they have? What boundaries should exist? What actions require human approval? What gets logged? What can be rolled back? Who is accountable?
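For readers who think in code, those questions can be made concrete. What follows is only a minimal sketch, not how any real agent framework works: `ActionGate`, its fields, and the action names are all hypothetical, invented here for illustration. It shows the idea of a gate that sits between an agent and its tools, where a short list decides what is permitted at all, some actions wait for a human yes, everything is logged, and completed steps can be undone.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set


@dataclass
class ActionGate:
    """Hypothetical gate between an agent and the tools it wants to use."""
    allowed: Set[str]                     # actions the agent may take at all
    needs_approval: Set[str]              # actions that also need a human "yes"
    approve: Callable[[str, str], bool]   # assumed human-in-the-loop callback
    audit_log: List[str] = field(default_factory=list)
    undo_stack: List[Callable[[], object]] = field(default_factory=list)

    def run(self, actor: str, action: str,
            do: Callable[[], object],
            undo: Optional[Callable[[], object]] = None) -> bool:
        # Boundary: anything not explicitly allowed is refused.
        if action not in self.allowed:
            self.audit_log.append(f"DENIED {actor} {action}")
            return False
        # Human approval: sensitive actions wait for a person to agree.
        if action in self.needs_approval and not self.approve(actor, action):
            self.audit_log.append(f"REJECTED {actor} {action}")
            return False
        do()
        if undo is not None:
            self.undo_stack.append(undo)  # remember how to reverse this step
        # Accountability: record who did what.
        self.audit_log.append(f"DONE {actor} {action}")
        return True

    def roll_back(self) -> None:
        # Undo completed actions in reverse order.
        while self.undo_stack:
            self.undo_stack.pop()()
        self.audit_log.append("ROLLED_BACK")


# Usage: a toy calendar and a human who says no to every email.
calendar: List[str] = []
gate = ActionGate(
    allowed={"add_event", "send_email"},
    needs_approval={"send_email"},
    approve=lambda actor, action: False,
)
gate.run("agent-1", "add_event", lambda: calendar.append("lunch"), lambda: calendar.pop())
gate.run("agent-1", "send_email", lambda: None)       # blocked by the human
gate.run("agent-1", "transfer_money", lambda: None)   # never allowed
gate.roll_back()                                      # calendar is empty again
```

The design choice worth noticing is the default: the agent can do nothing unless someone has written it down as permitted. That is the opposite of handing over the keys and hoping for the best.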

Trust must never become something we feel simply because the interface is sleek and the voice sounds warm.

Trust has to be built.

Perhaps the most human part of this entire development is not the AI itself, but our own weaknesses. Our love of speed. Our attraction to convenience. Our tendency to call something progress simply because it moves quickly. Our willingness to outsource what is difficult, even when the difficult part is exactly what keeps us awake, responsible, and human.

So can AI become dangerous?

Yes, without a doubt.

Can AI agents become useful?

Absolutely.

But that is exactly why we need to be even more careful.

The problem is not only the intelligence of these systems. The problem is what we allow them to access before they are mature enough, safe enough, and restricted enough. The greatest danger may not be a future superintelligence, but a half-understood intelligence that already has full access today.

That is where our focus should be.

Not in panic. Not in worship.

But in responsibility.

Because if we do not learn how to brake while steering, we may soon discover that it was not AI that first took control.

It was us who gave it away.

By Chris...
